I Always Wanted to Be a Media Theorist Who Wrote with a Telegraph Key

With a first-hand experience of observing and participating in the inception of the internet and early machine writing, Steve Tomasula reflects on his and Joseph Tabbi's interconnected history within a new form of the sublime. Using Tabbi's collected works as a framework, Tomasula explores the posthuman experience of narrative architecture.

Part I: I Always Wanted to Be a Media Theorist Who Writes in Longhand

The year 1995 is widely recognized as the watershed between the Internet as a domain of scientists and its mainstream use. In the English department where I was a grad student, and whose faculty Joe Tabbi would later join, there was a terminal room: a closet that had been recommissioned to hold a keyboard connected to the mainframe in the Computer Science Building for those few who used this new thing called email. Word processing on this terminal was possible, and an exciting leap forward, as anyone who’s ever had to compose an error-free document on a typewriter could attest, but it required using dot commands. That is, if you wanted a paragraph break, you’d have to type its code (left-arrow, backslash, [dot] p, right-arrow). Later, I graduated to my first PC (as they were called, to distinguish them from mainframes, the only other option), and the wonder of WYSIWYG. Or as Tabbi writes, “I remember well the transition…from typewriter to computer using Microsoft Word 5.0,” and part of what made it so memorable, he later wrote, was the awareness that these machines were not simply neutral objects. It was clear that the machine was writing on us, changing how we thought (Posthumanism 76), and in retrospect, doing so faster than we realized.

Soon after, while working on my first novel, my day job was as a writer in the Satellite Division of Zenith Electronics Corporation. Though this was a multinational electronics company, I believe I was the only one in it to occasionally work from home, excitedly watching the red lights blink on the 300-baud modem I was able to wire into my PC’s motherboard—one LED for each bit of the 8-bit words it was sending—taking about 9 minutes for a 10-page document as the Internet continued to trickle into our lives.
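For a rough sense of that nine-minute figure, the arithmetic can be sketched as follows. The page length (about 1,650 characters), the framing of each 8-bit character with one start bit and one stop bit, and the treatment of 300 baud as 300 bits per second are assumptions made for illustration, not details from the account above.

```python
def transfer_minutes(pages, chars_per_page=1650, bits_per_char=10, bits_per_second=300):
    """Estimate the minutes needed to send a plain-text document over a modem.

    chars_per_page and bits_per_char (8 data bits plus start and stop bits)
    are illustrative assumptions, not measured values.
    """
    total_bits = pages * chars_per_page * bits_per_char
    return total_bits / bits_per_second / 60


# A 10-page document at 300 bits per second
print(round(transfer_minutes(10), 1))
```

Under these assumptions the estimate comes out a little over nine minutes, in line with the recollection.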

I bring up these features of the digital landscape to highlight how forward thinking the writing of Joe Tabbi was at the time. As his first book was being published, the number of web pages grew from about 10K to 100K, rapidly swelling into first a stream (the invention of JavaScript allowed scrolling and interactivity on web pages), then a raging river (Amazon, eBay, and Netscape came online), and then the Noachian flood that followed: a “totalizing power that defies representation,” as Tabbi described it: a power and scale that is both terrifying and awe inspiring—sublime, as Romantic poets might describe a volcano, tsunami, or other force of nature (Sublime ix). Indeed, it’s not an exaggeration to say that this is the year the world changed.

And as new ages summon new prophets, they also summon new literatures.

It’s a given that artistic form and the epistemology and ontology of a time are mutually reinforcing as well as generative. Indeed, formal changes are the reason the arts have a history at all. Or as literary historian Franco Moretti concluded after using software to analyze thousands of novels, “For every genre comes a moment when its inner form can no longer represent the most significant aspects of contemporary reality…” and is superseded by forms that do (62). Often, the catalyst for transformation is the technology or science of an age as when Galileo helped move the earth from the center of the cosmos; as Darwin did by ushering humankind from the center of The Garden; as the digital and genetic advances that gave us Dolly the Sheep, and our global, electronic ecology, continue today to make individual borders ever more diffuse.

And this is my real subject today: the new literature that media theorist Joe Tabbi’s oeuvre summons. Specifically, I consider his major books: Postmodern Sublime: Technology and American Writing from Mailer to Cyberpunk (1996), Cognitive Fictions (2002), and most recently, The Cambridge Introduction to Literary Posthumanism (2024).

Taken together, they comprise a manifesto, developed across 30 years, that also describes a way for the novel to remain relevant in this post-Noachian technical age.

But what I’ve found equally compelling is an offhand remark Tabbi made to me some 30 years ago: “I’ve always wanted to be a media theorist who writes in longhand.”

I use that evocative utterance as the subtitle of Part I of this three-part essay, which begins with Tabbi’s deepest level of inquiry, Cognitive Fictions. In it, Tabbi draws a parallel between what science reveals about cognition and how select novels reflect the lessons of cognitive science. Underlying this inquiry are the questions: Why, if science doesn’t support assumptions that our sense of self and world comes solely from the mind’s unconscious continual construction, revision, and reconstruction of the narrative we call our life, is the preference for conventional, character-based narrative, a form of 19th-century realism, still so prevalent? Can a reconsideration of the novel through the lessons of cognitive science renew narrative, especially the print novel, marginalized as it is by new technologies of narrative? (American Book Review 3).

It’s instructive to consider the conventional understanding of the mind’s functioning, and the concomitant character-based narrative, that was dominant at the time of Tabbi’s first book—and still is today. Its poster child is, of course, Freudian psychology with its emphasis on psychosexual development via the interplay of the conscious and unconscious mind, the anxiety inherent in the resulting tension, and the mitigation of this anxiety by various defense mechanisms that bury the real psychological reasons for one’s behavior. Mental events, or consciousness itself, often replace action as the basis for plot—e.g., the subject of a modernist novel is less about what happens than what someone thought had happened—while criticism, as Tabbi notes, often reads these texts using techniques of psychoanalysis (Cognitive 36). Symbol hunting, to put it less charitably.

Though Freud was seminal in our understanding of mental states, we now understand him less as a scientist and more as a poet, describing the anxieties of his age. As Tabbi lays out in his Cognitive Fictions, the theory of mind one finds emerging out of contemporary sciences of the mind is much more empirical than Freudian psychology and has “produced detailed descriptions of…modules [of brain,] and distributed neural networks in us” (x).

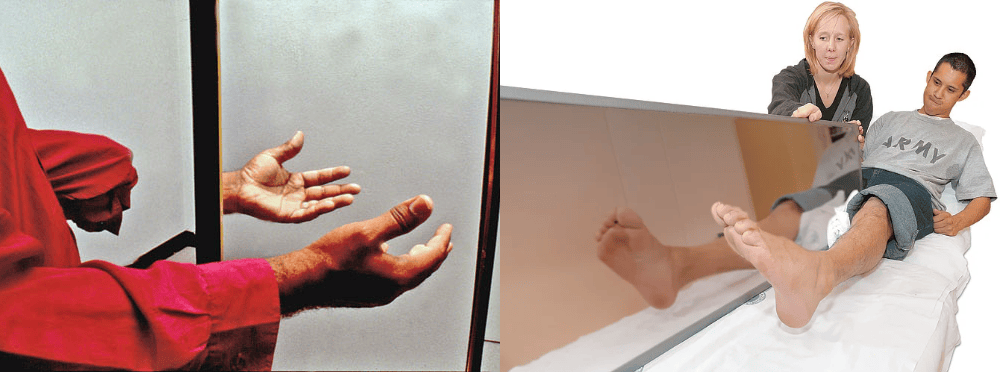

Examples that come to mind include V.S. Ramachandran’s research with phantom limb pain in which a person who has lost a leg, for example, still feels the pain in the missing leg as if it’s still there. By substituting a mirror image of the intact leg in the place of the missing leg, therapy on the intact leg can teach the patient’s brain to feel relief in the missing leg. That is, cognition as a function of brain is distributed: it involves not only the brain but also external materials and relationships (Guenther).

Cognition (perception, memory, motivation, etc.) is understood, then, more as an emergent phenomenon arising from clusters or modules of brain activity down to the level of the cell and can often be mimicked through computer simulations (Cognitive xvi-xvii). Indeed, it’s useful to note that the Large Language Models used in AI have similarities to the way neuroscientists believe the brain operates, using a “semantic hub” to integrate information from differing modalities. Distinct subsystems, connected to the eyes and ears, for example, operate too fast for consciousness to register and feed the hub through modality-specific “spokes”: a process that is no more available to consciousness than the neurological pulses that allow you to read these words. In machine learning, Tabbi writes, the more layers of processing that a neural network has between input and output (with more than 3 being considered a “deep learning algorithm”), the more opaque its operations will be (Posthumanism 181): a characteristic that will also have consequences, described below, for the novel.

With this theory of mind as a starting point, Tabbi asks us to consider literature not as a representation of reality, but as a “means of registering experiences that emerge from a largely mediated environment” even if we are unaware of the processes that create the experiences (Cognitive xiv). “Hence,” he writes, “many of the questions I ask of literary texts … will be the same questions contemporary cognitive scientists find themselves asking. For example, ‘How do cooperation, seriality, sequentiality, and the focality of consciousness actually come about within a distributed system?’ (Cohen and Schooler 7); OR ‘How can we identify consciousness?’ (5); These are all questions that ultimately have to do with the knowability of one’s subject, by human subjects whose cognition is much broader than consciousness” (Cognitive xiv).

Yet, as Tabbi notes, consciousness “famously evades one’s grasp the moment one attempts to observe it in oneself” (Cognitive xiv). The metaphor of the lightbulb in the refrigerator comes to mind: Is the light always on or only on when I open the door? Does opening the door, so to speak, activate consciousness or only my attention to it? The flicker between the answers implied by cognitive science, this “subjective, ephemeral” quality, as Tabbi calls it (Cognitive xiv), is a recurrent theme in “novels that reflect on their own methods of noting phenomenal experience” (Cognitive xiv). That literature’s subjective power is also, like consciousness, inextricably caught up with its ephemeral nature has profound implications for how we value the act of writing in an era of media multiplicity.

Indeed, he argues that the fictive presentation of cognitive operations is our time’s literary defamiliarization par excellence. “In trying to bring the full range of cultural noise and mediation to literary consciousness, such works ultimately imply more significance, more context, and more connectivity than any single mind could ever hold in experience or present on a page” (Cognitive xv).

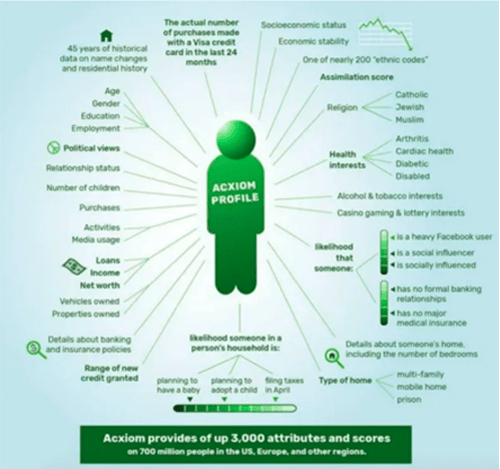

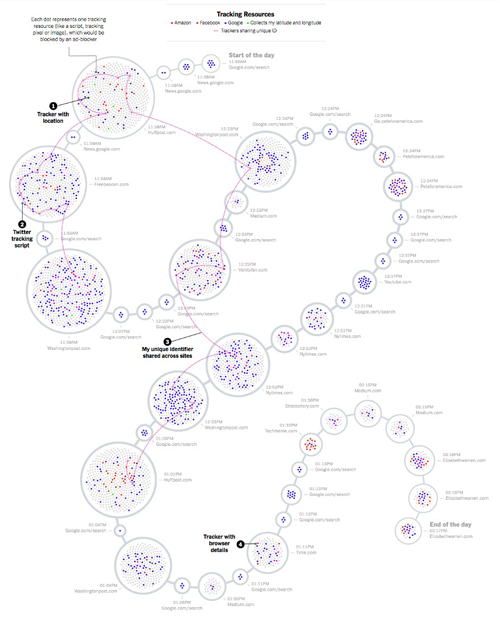

This distancing and distributing of consciousness may feel familiar to us, for as Tabbi writes, it is not confined to literature: every day, with each use of a credit card, we are “telling the man” more about ourselves than we know, as a character in [Thomas Pynchon’s] Vineland says. Or, as critic Linda Brigham wrote in an email: “Amazon.com gives me all kinds of suggestions about what to buy because IT, more than I, knows what I have bought there, and has, in its database way, given thought to the matter” (Cognitive xxi).

Like the influence of the machine that Tabbi felt moving from a typewriter to Word 5.0, like Ramachandran’s experiments with mirror therapy, the database is the phantom limb we feel aching even if we can’t see or touch it.

Still, we can try. Anyone’s cell phone could have been used to make an animation such as the one made by a Green Party candidate in Germany who hacked into his own phone to access the trove of location data that it sent back to his phone company.

In detail, it maps out his movements over a six-month period, revealing for example, where he was, how long he stayed at each location, whether he traveled by car, train or on foot, when he made or received a call or text and from whom, and many other details of life that could be extrapolated from the simple pings all phones need to communicate (Biermann).

See, for example, one drone’s-eye-view of a mass of protesters who have turned on the flashlight feature of their phone as a form of political protest in Hong Kong.

Commenting on the thousands of phones visible to the drone, investigators point out that each one also emanates an invisible beacon of location and identification data that others can eavesdrop on, and that we give away for the convenience of being able to locate ourselves on a map, order a pizza, book an Uber, check the weather, or use any of the thousands of apps that require this signal, a signal that “keeps broadcasting long after the protestors turn off their camera lights, head to their homes, and take off their masks” (Thompson and Warzel).

Yet, as powerful as location data may be, it is only one type of information harvested about each person.

Clearview AI is one company that banks digital diagrams of the millions of faces that can be found online. The websites we visit install trackers on our computers, phones and other devices that generate metrics about their users, which are gathered by data brokers such as Acxiom LLC, just one of a dizzying array of corporations that compile this information, offering fine-grained profiles of some 2.5 billion individuals, as the company’s promotional material proudly explains (Privacy Bee).

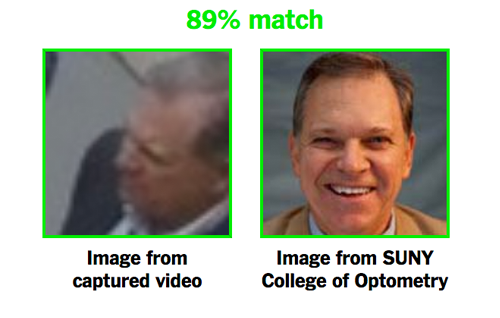

When combined at an industrial scale with databases of digital face prints, it is possible to identify and track the residents of a whole city, or a whole country. Without any special training, it only took a “few minutes” for reporters from the New York Times to single out and identify, for example, celebrities and their overnight guests; protestors at Trump’s inauguration; the police who arrested them; Secret Service agents assigned to protect Trump (Thompson and Warzel), as well as ordinary people just going about their day, such as Dr. Richard Madonna, a professor of optometry, picked out at random and identified by reporters using $60 worth of Amazon’s facial-recognition service as he passed by a public weather camera (Chinoy), a service to which anyone can subscribe.

Of course, we know all of this (email is often said to be a euphemism for Everyone’s-Mail, i.e., mail anyone can read). We realize that our phone company remembers more about our movements than we do and maybe knows more about us than our spouses. For as one judge noted, simply knowing where someone travels would allow others to “deduce whether he is a weekly church goer, a heavy drinker, a regular at the gym, an unfaithful husband, an outpatient receiving medical treatment, an associate of particular individuals or political groups and not just one such fact…but all such facts” (Ball).

While we might be surprised to learn the “obscene extent” to which we are tracked, as one reporter put it after tallying the hundreds of entities that installed tracking software on his computer over the course of a single day (Manjoo)—like him, we do not give up our Internet connections, or ATM cards. We don’t stop using websites to book hotels or order tickets. Just the opposite. We ourselves engage in deeper levels of this behavior—bringing into existence “googling” as a verb; using apps that create pointillist selfies, so to speak, of sleep patterns, pastry consumption, dog-walks, location, alertness, productivity, the number of steps we take in a day, how fast our heart beats, what we and those around us are ordering in a restaurant, and dozens of other metrics: we assume we are tracked, judged, sorted, even as we are asked to rate not just our Uber driver, but practically every service employee we come in contact with.

Unlike the uber-civic citizens of Orwell’s dystopia—and this is the most telling point—the vast majority of the personal information that is available for use by others has been made available by us, voluntarily, or at least indifferently. Facebook alone accounts for 100 billion voluntarily “shared” exchanges each day (Connell 2025), all of which are “shared” with Facebook itself, of course. To give a sense of the size of this database, if this amount of memory was used to store songs, it would take 306,223 years to play them all back (300 petabytes of memory/three-minute songs).
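The playback figure can be reproduced with a few lines of arithmetic. The sketch below assumes binary petabytes, a 6 MiB file for each three-minute song, and a 365.25-day year; none of these is stated above, and they were chosen only because together they land on the quoted number.

```python
PETABYTE = 2 ** 50                    # bytes, binary convention (assumed)
SONG_BYTES = 6 * 2 ** 20              # 6 MiB per song (assumed)
SONG_MINUTES = 3                      # three-minute songs, as in the text
MINUTES_PER_YEAR = 365.25 * 24 * 60   # average year length (assumed)


def playback_years(petabytes):
    """Years needed to play, back to back, every song that fits in the store."""
    songs = petabytes * PETABYTE // SONG_BYTES
    return songs * SONG_MINUTES / MINUTES_PER_YEAR


print(int(playback_years(300)))  # prints 306223
```

A different song size or a decimal petabyte would shift the total, so the figure is best read as an order of magnitude: hundreds of thousands of years.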

The degree to which my description of surveillance culture is familiar, even quotidian, is one indicator of the degree to which the humanist sense of the individual, made possible by the concepts of separation and privacy, is becoming obsolete. We may not have reached the point expressed by Facebook founder Mark Zuckerberg: that privacy is “no longer a social norm,” i.e., no longer expected, or even wanted (Johnson). But the view of computer scientist Arvind Narayanan seems accurate: according to him, the amount of data that each of us generates just by living, the incentives to mine this data, and the increasing power and decreasing cost of the tools used to do so have already brought us to the point where anonymity of any kind may be algorithmically impossible (Tucker).

As Tabbi puts it, what were once local experiences in everything from shopping to human relationships have been expanded by the database: a distributed memory that is less part of our consciousness than something larger than the individual, something that is everywhere and nowhere, a hyperobject like capitalism that knows more about us and influences our actions. And cognitive fictions, as Tabbi terms those fictions that reflect this state of distributed cognition, do trace a kind of book death. But only in the humanist sense of the novel, as conventional humanist assumptions are undermined by new technologies, replaced by the hive mind and social formations they engender, as well as the social/cultural systems we use to perceive and construct reality.

Except for Thomas Pynchon and Richard Powers, the authors that Tabbi parses for signs of writing under the sign of our digital phantom limb don’t address it thematically. But that does not prevent these authors from registering the effects of what it feels like to live in a world where consciousness itself is increasingly distributed and so threatens to escape the experiential constraints that were once taken for reality. They do so not by expanding into new media, “and so lose their distinctive outlines,” nor by retreating into the “reflexive maze of a mind thinking about itself thinking” (Cognitive xx). Rather, they refashion themselves to answer the challenge of new media through what Richard Grusin and J. David Bolter call “remediation.” Beyond the mere repurposing of one older medium within another (e.g., the movie version of a novel), remediation is a process of reconceptualizing an older medium in the context of the new, or in the case of the novel, the context of a new media landscape, and a more distributed sense of cognition, and material (Cognitive xx). Think here of how photography was once proclaimed to be the death of painting, or movies were predicted to be the death of theater. Instead, once freed of its documentational role, painting became more painterly; theater became more theatric. Likewise, new media might compel the print novel to be more “printerly.”

This is one of the ways I understand a media critic who writes in longhand. Those of you who are old enough to remember paper phone-books and maps and dictionaries will understand how the print that remains after the Gutenberg parenthesis will be more printerly: a medium more suited than the digital, as Tabbi writes, for “sustained memory — and long-term subjective interactivity” (Posthumanism 53).

I remember being in a rare-book room and holding a sheet of music—a clear example of the kind of printerly notation Tabbi refers to. The music was composed by Schumann, or some other famous person—that wasn’t important—what mattered was how clearly the rhythm of writing in longhand seemed to materialize and be present in the room: the first notes were jet black on the page, then as the hand continued to write, the notes gradually grew fainter, until suddenly they got strong again because that was the point at which whoever was writing had obviously dipped the quill he or she was using into the inkwell to recharge it. And so it went, line after line, all the way down the page, strong black notes followed by notes that grew progressively fainter until the pen was dipped, and the cycle began anew with a jet-black note. If I half-closed my eyes, the lines appeared as the kind of grey spectrum that art students used to practice, over and over, rhythmically, like music itself, like waves at the beach, or rather waves in time and motion, the contemplative rhythm of someone engaged in the close work of writing, or rather, copying: the pattern was too uniform to be composing—and it conjured a whole way of life that has vanished: a world where being literate meant learning how to trim the nib of a feather, and shape it to create different fonts (square, blunt end for gothic; tapered with just the right amount of springiness to write in italics); they would have carried a ‘pen’ knife to sharpen the quill when it dulled; and there would have been shops to buy them in… But of course, all those shops are now ghosts too…

“I need to adjust my assumptions about the real when communicating with someone who came of age in a different discourse network,” Tabbi wrote, “even as a letter sent to a friend without e-mail forces me out of one system (with its expectations about response time, attentiveness, the likelihood of the message being saved, etc.) and into another, older system.” Likewise, the “potential for entering into a relationship changes” when phones acquire Caller ID and “I no longer risk interrupting another person but instead expose myself to that person’s scrutiny” (Cognitive xx). The Caller ID of telephones redistributes romantic power, in other words. But I wish I could time travel to tell the Joe Tabbi of 1996, Man, you ain’t seen nothing yet! Wait till you get to the age of the algorithm. Swipe left.

Part II: .. / .- .-.. .-- .- -.-- ... / .-- .- -. - . -.. / - --- / -... . / .- / -- . -.. .. .- / - .... . --- .-. .. ... - / .-- .... --- / .-- .-. --- - . / .-- .. - .... / .- / - . .-.. . --. .-. .- .--. .... / -.- . -.--

(Translation: I’ve always wanted to be a media theorist who wrote with a telegraph key.)
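The heading’s encoding can be reproduced with a small encoder. This is a minimal sketch using International Morse Code for the letters A to Z only; the function name `to_morse`, the single space between letters, and the ` / ` between words are conventions assumed here, and characters outside the table (such as the apostrophe) are simply dropped.

```python
# International Morse Code, letters only
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
}


def to_morse(text):
    """Encode text as Morse: spaces between letters, ' / ' between words."""
    words = []
    for word in text.upper().split():
        # Characters without a table entry (punctuation, digits) are skipped.
        letters = [MORSE[ch] for ch in word if ch in MORSE]
        if letters:
            words.append(" ".join(letters))
    return " / ".join(words)


print(to_morse("a telegraph key"))
```

Calling `to_morse` on the full sentence yields a string that can be compared, word by word, against the heading.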

Following Slavoj Žižek, Tabbi would describe the distributed reality summarized above as a “material reality…that ‘in reality’ is wholly simulated” (Žižek 31): a hyperobject or “sublime object” that is neither wholly mind nor wholly body but an “immaterial corporeality” (18) (Sublime 10). A city, as Tabbi notes, that is no longer walkable, and so no more available to the flâneur than is the realism of a Balzac, Dickens, Dreiser, or Dos Passos (Sublime 22).

It is, however, available to the digital flâneur or flâneuse, the doom scrollers, the web crawlers, the trolls, the influencers and their followers, the Large Language Models that both read and generate these digital landscapes. Indeed, Tabbi writes, “one could hardly find a more contemporary occasion for the sublime than the excessive production of technology itself. Its crisscrossing networks of computers, transportation systems, and communications media, successors to the omnipotent “nature” of nineteenth-century romanticism, have come to represent a magnitude that at once attracts and repels the imagination”: The Postmodern Sublime (Sublime 16).

Since Tabbi coined that phrase, our world has become even more sublime in its degree of interconnectedness, virtual reality, decentralization, absent centers, dissolving categories, heterogeneity, genre blurring and genetic mixing, surveillance and meta-awareness at a scale that far exceeds that of the postmodern. The global is literally local, not just metaphorically so, and the evidence can be seen in phenomena as diverse as global warming, or a local hospital’s operations held for ransom by hackers an ocean away. Ever more frequently, Tabbi writes, “architects and engineers are coming to rely on computer simulations to reconstruct the sensual experience that is felt increasingly to be passing from ordinary life. And when the body is denied an integral…place in the ordinary lifeworld, it has a tendency to become itself the scene of writing and self-expression” (Sublime 22).

For though I’ve focused on the phantom limb of distribution and databases, the postmodern sublime Tabbi describes could also be tracked through bodies: from a time when biology was synonymous with destiny to today, a time when people no longer need go through life with the nose or chemistry they were born with but assume that their bodies, even their DNA, can be edited, patented, and rearranged while progress in bioengineering, genetically engineered animals, 3D bio-printed body parts, pharmaceuticals, and industrially grown biomaterials are rapidly bringing body modifications to entire populations (Buckles).

And of course, all of this border crossing is being accelerated by AI. It need not be pointed out how rapidly AI is erasing both material and metaphoric borders in every aspect but especially in terms of channels of communication and relations between humans. As AI becomes a more significant part of our culture, it’s becoming harder to not be a participant in the grand experiment in simulated reality or immaterial corporeality that we are living. We use AI in ways that are as seemingly innocuous as letting it complete our sentences to those that foretell a dark future: in simulated dogfights, American fighter pilots are regularly wiped out by AI-piloted jets (Lipton).

Quotidian life now includes the fact that all viral posts on social media contain AI-generated comments and reposts. Many of the “people” seen on social media sites such as Instagram are AI-generated models. Google searches return AI images and articles, while marketers flood the Internet with AI-generated reviews and influencers. Even mainstream periodicals publish AI-generated articles under the byline of AI-generated authors and use AI-generated voice actors to read their audio articles (Hoel). So pervasive are AI-generated or altered realities that they range from nude photos high-school students make of their classmates by using “declothing” apps (Ryan-Mosley) to “documentation” in court cases and peer-reviewed scientific journals, as well as, for example, the “gold standard” report on chronic illness issued by the US Department of Health and Human Services that cited AI-hallucinated studies (Gedeon). Indeed, AI-generated content is becoming so ubiquitous that the companies that make these tools are concerned that their models could implode since they continually use this sea of fakes, simulations, and misinformation to learn the content upon which future results will be based (Hoel).

My point in this expansion of what Tabbi termed early on as the Postmodern Sublime is to underscore how the dominant social formations and material facts of day-to-day existence have contributed to a sense of self, and a sense of that self’s place in society, that, as Tabbi notes, is different from the Modern sense of self, or the Medieval, or Classical sense of self, we might add. As Gerald Bruns writes, “the human” has always been a “literary concept,” a discursive formation like “the divine” (3, 5). And today, societal pressures have come to the fore to imagine the human as, in the words of N. Katherine Hayles, “an amalgam, a collection of heterogeneous components, a material-informational entity whose boundaries undergo continuous construction and reconstruction” (How We Became Posthuman 3). Not only is it becoming increasingly difficult to keep our sense of privacy from merging in the corridors of others, it is increasingly difficult to say where I begin and you end, materially, as well as socially, as the last pandemic highlighted (How We Became Posthuman 4).

Or, as Tabbi puts it, “when we contemplate technology … we are ultimately contemplating ourselves, for the expressive potential within the machine returns us to the source of all thought, the human mind in relation to other minds. We may not be able to comprehend the abstract network of contemporary technological forces, as writers in the tradition of 19th century, naturalistic fiction believed they could,…extrapolating from the forces contained in more visible steam, steel, and coal technologies. But this representational insufficiency does not prevent us from reflecting imaginatively” (Sublime 22). Indeed, the ramifications for how we read and write are as profound as the emergence of privacy that led to Shakespeare’s invention of the soliloquy.

Part III: Whether I Want to or Not, I’m a Media Theorist Who Writes with AI

So I asked ChatGPT about this posthumanism business, and he/she/they or it told me that art and literary periods from the Renaissance until the present can be seen as one humanist longue durée with features in one period waxing or waning in the others. When differences in degree become so marked as to be differences in kind, though, a watershed emerges, as for example between modernism and postmodernism. And in similar fashion, differences between the last 500 years of humanism and the present, or in material terms, the period from Gutenberg to the Internet, are giving rise to differences in kind that can be called posthumanism: a paradigm shift from the sense of self as a being with a unique and private mind and life to a sense of self that is more widely distributed, shared, invisible. Thus, unlike artists and authors who during modernism tried to model inner consciousness, for example, some artists and authors today experiment with moving conceptually outward: to model experience from a position where the individual is neither the center of creation nor unique and exceptional; where the narrative engine is more likely to be a concept of emergence rather than cause-and-effect; and whose characters embody a posthuman ontology of permeable borders, distributed cognition, and non-human agency in place of the unique, individual singularity, and exceptionalism of humans in the humanist novel; whose narration admits as coauthor the technological and scientific forces that bring it into existence.

In this regard, Tabbi is careful to distinguish posthumanism from transhumanism. That is, rather than using the term posthuman to signify an “extension of human powers,” i.e., the cyborgs of science fiction that often reify the most traditional humanist values, his use of the term signifies a dramatic departure from humanist and Enlightenment traditions that have dominated over the last 500 years (Posthumanism 1).

Posthumanism is not just another period, though (indeed, Tabbi believes that the idea of periods is passé) (Posthumanism 2). He points to literatures of the past that have affinities with contemporary new-materialist writings: Arachne turning into a spider, Daphne transforming into a laurel tree, and all the other instances of the porous nature between humans and non-human beings in Ovid. Other literary antecedents of posthumanism would include Mary Shelley’s Frankenstein, Ralph Waldo Emerson’s “Circles,” Percy Shelley’s “Defense of Poetry,” William Wordsworth’s “The World Is Too Much With Us” and Herman Melville’s “Bartleby, the Scrivener,” and many others (Posthumanism 90).

The convergence of literature and cognition that Tabbi notes today, though, is a reason for the shift he sees toward more cognitive realism in fiction based on notation and reportability rather than representation. Recognizing conscious experience as a process of selection, he sees an autopoietic creation out of noise that is far more complex than anything yet accomplished by computer simulation (Cognitive xxii).

In brief, Tabbi writes, “one feature of a literary posthumanism is its ability to reconceive other-than-human narratives, without projecting God-like qualities onto either individual subjects or collective machinations”: a “literature that gives us less domesticity, fewer filled out characters and more world” (Posthumanism 96).

Art historian Erwin Panofsky describes how forms that cut across the idiosyncrasies of individual artists can embody an overall epistemology. His exemplar was the genre of linear-perspective painting: a form of visual representation that organized the world spatially rather than by the religious hierarchy used by medieval artists. And if we look, as Tabbi does, across the idiosyncrasies of individual artists and writers, we get a sense of some of the ways a posthuman aesthetic might be manifest. Though the forms, methods, and goals differ, an aesthetic can be seen in which isolated individuals are subsumed by groups, or the uniqueness of an individual, as in a psychological portrait, is replaced by notation and reportability, where pattern forms the ground of being and/or the material world is given agency.

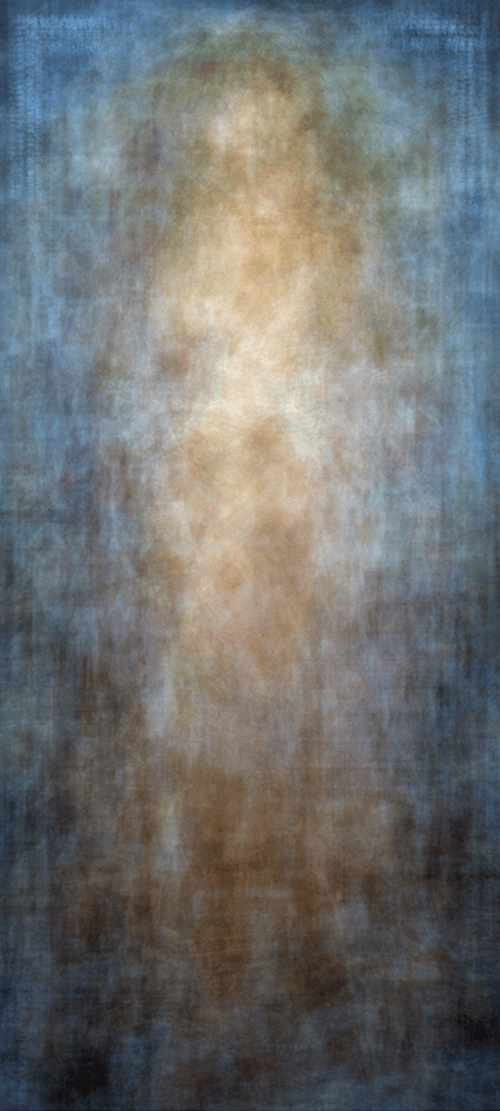

Artist Jason Salavon describes his work as a hybrid of “cultural sampling and data mining” (Franklin-Wallis) in which, for example, he averages the pixels of 10 years of nude centerfolds to create his Every Playboy Centerfold, while Eric Whitacre assembles choirs of thousands of singers by collapsing the time and space between them.

Eduardo Kac’s plantimal—a plant-animal hybrid—is a flower created in a lab as a work of art that carries both plant and human DNA: a fusion of Eduardo’s own DNA with that of a petunia, giving the flower its eponymous name Edunia (Tomasula 287).

Also using technology to raise issues of identity, privacy, and boundaries, or lack of them, artist Heather Dewey-Hagborg walks around New York City, picking up bits of hair, chewing gum, cigarette butts, and other litter that she finds in places like the subway.

She then extracts the DNA left on these things, and from it creates 3D portraits of the people who smoked, chewed, or otherwise used them. While on the surface the output of her practice resembles traditional portrait busts, the real subject, as in the work of Salavon and Kac, is obviously not the representation of people but the database of genes and the technologies that allow the depictions of people to be made.

Likewise, in literary fiction, there is an emerging awareness that Stendhal’s metaphor of the novel as a mirror on the road of life may be less relevant to telling the story of today than a narrative that is at home with a larger-than-human scale and the agency of nonhuman actors. One that not only does not mourn the loss of the first-person narrative, but privileges the virtual over being present, or big-data patterns over individual detail; that incorporates randomness or chaos as parts of life, and privileges probability over mechanistic cause-and-effect; that takes as a given the fact that the body, the last firewall to the individual, is in the end as permeable as all barriers between the “I”, culture, and society.

Claire-Louise Bennett’s novel Pond blurs the lines between self and object, with candles, rugs, bookcases, and other things serving as non-human actors. Her “detailed presentations (of bananas, arrangements of fruit bowls on a bare window ledge, porridge, sliced almonds that resemble fingernails),” Tabbi writes, “are some of the clearest current examples in contemporary fiction of an object-oriented literary practice” (Posthumanism 121).

Though widely recognized as the first great novel of the twenty-first century, Roberto Bolaño’s 2666 (2004) provoked frustration in reviewers who tried to describe the subject in traditional plot- or character-centric terms: “The difficulty is not the novel’s heterogeneity of form,” wrote one; “The problem is that there is no single central something about which the novel attempts to speak” (Deresiewicz). The subject of Bolaño’s novel, however, is precisely its attempt to speak about a lack of a center, or the “hidden center,” as Bolaño referred to it (896): the “vast solar system of intangible structures” that “underlies society and has its influence in determining the conduct of [individuals and] society as a whole,” as social scientist James Moody described such networks (2009). It may be that 2666 is the round peg that cannot fit the square hole of traditional, humanist literature, but it maps closely onto the model of social network as narrative: a multitude of characters are introduced, only to disappear completely, turn into dead ends, or become faint traces in the web of connections that bring into existence larger patterns.

As the novel hopscotches across decades and continents, none of the crimes it revolves around are solved; indeed, little is revealed, other than a sense of what it is to be alive today, when a desire for inexpensive appliances in America or Europe can create social circumstances that make poor women easy prey in Mexico; a time when people are both individuals and aggregates. As Sarah Kerr wrote, describing this “post-national” novel: “if 2666 contains a lesson it is that people are always from [a] confluence of factors” (16). It is, in other words, a novel where pattern forms the ground of being, though with our individual, worm’s-eye view of life, this too is normally invisible to us.

In his novel Measuring the World, Daniel Kehlmann imagines the naturalist Alexander von Humboldt telling the mathematician Johann Gauss that he had heard that Gauss was now concentrating on probability theory. “Death statistics,” replied Gauss. “One thought one controlled one’s own existence,” he continued. “One created things, discovered things, acquired goods, found people one loved more than one’s life, had children, maybe clever, maybe clods, watched the person one loved die, got old, got ill, and then got buried. One thought one had decided it all oneself. Only mathematics demonstrated that one had always taken the common path…” (187).

Near the end of his life, Gauss has discovered the long view or, we might say, the data-narrative.

Gauss could tell his story of patterns through the form of the humanist novel, maybe as a bildungsroman of one of his children, or really from the viewpoint of any of us here—the Human Scale. Or he could tell their—and our—story from the long view: the view we have from a rising airplane. Or the viewpoint of data, as Hayles calls it (Computer 30). As we move from the viewpoint in the humanist novel to that of data, what is lost is the narrative of the individual viewpoint… What takes its place is pattern and shape: the material traces of systems, the grid of a city’s streets, the squares of fields, the law of averages, or immaterial corporality of wholly simulated realities—the mirror image of a phantom limb, the invisible database that is coauthor to my selection of a spouse or next read. Pull out even further, and even these traces of collective action evaporate, even human time, or as we might call it, plot. Tabbi reminds us that constructing a narrative always determines what will be seen, and so what is. Our narrative architectures are no more neutral than was Galileo’s telescope, and we might, as Joseph Tabbi writes, “welcome a posthuman perspective… not as an extension of our own individual agencies and cognitive capacity” but as a “revitalization of our imaginative engagements” with “elements that are only partially known…” (Posthumanism 10).

Works Cited

Ball, James. “NSA Data Surveillance: How Much Is Too Much?” The Guardian, 10 June 2013, www.theguardian.com/world/2013/jun/10/nsametadata-surveillance-analysis. Accessed 26 May 2024.

Biermann, Kai. “Betrayed by Our Own Data.” Zeit Online, 10 Mar. 2011, https://www.zeit.de/digital/datenschutz/2011-03/data-protection-malte-spitz. Accessed 4 July 2025.

Bolaño, Roberto. 2666. Translated by Natasha Wimmer, Farrar, Straus and Giroux, 2008.

Bruns, Gerald. “On Ceasing to Be Human.” Manuscript of the Roger Allan Moore Lecture, Department of Social Medicine, Harvard Medical School, Apr. 1998, pp. 1-25.

Buckles, Susan. “Transforming Transplant Initiative Aspires to Save Lives through Bioengineering.” Mayo Clinic News Network, 6 Mar. 2024, https://newsnetwork.mayoclinic.org/discussion/transforming-transplant-initiative-aspires-to-save-lives-through-bioengineering/. Accessed 19 Jan. 2025.

Burke, Kenneth. Counter-Statement. University of California Press, 1966.

Burt, Andrew, and Dan Geer. “The End of Privacy.” The New York Times, 5 Oct. 2017, https://www.nytimes.com/2017/10/05/opinion/privacy-rights-security-breaches.html. Accessed 19 Jan. 2025.

Chinoy, Sahil. “We Built an ‘Unbelievable’ (but Legal) Facial Recognition Machine.” The New York Times, 16 Apr. 2019, www.nytimes.com/interactive/2019/04/16/opinion/facial-recognition-new-york-city.html. Accessed 4 July 2025.

Cohen, Jonathan D., and Jonathan W. Schooler. Scientific Approaches to Consciousness. Lawrence Erlbaum Associates, 1997.

Connell, Adam. “36 Top Facebook Messenger Statistics for 2025.” AdamConnell.me, 1 Jan. 2025, https://adamconnell.me/facebook-messenger-statistics/. Accessed 19 Jan. 2025.

Deresiewicz, William. “Last Evenings on Earth.” The New Republic, 18 Feb. 2009, https://newrepublic.com/article/60694/last-evenings-earth. Accessed 27 Jan. 2025.

Dewey-Hagborg, Heather. “Stranger Visions.” https://deweyhagborg.com/projects/stranger-visions. Accessed 5 July 2025.

Editorial Board. “The Government Uses ‘Near Perfect Surveillance’ Data on Americans.” The New York Times, 7 Feb. 2020, https://www.nytimes.com/2020/02/07/opinion/dhs-cell-phone-tracking.html. Accessed 19 Jan. 2025.

Franklin-Wallis, Oliver. “Eye-to-Eye with Jason Salavon’s Algorithm-Produced Art.” Wired, https://www.wired.com/story/machine-strokes/. Accessed 27 Jan. 2025.

Gedeon, Joseph. “RFK Jr’s ‘Maha’ report found to contain citations to nonexistent studies.” The Guardian, https://www.theguardian.com/us-news/2025/may/29/rfk-jr-maha-health-report-studies. Accessed 4 Aug. 2025.

Global Observatory of Donation and Transplantation. “WHO-ONT.” https://www.transplant-observatory.org/. Accessed 27 Jan. 2025.

Goulemot, Jean Marie. “Literary Practices: Publicizing the Private.” A History of Private Life: Passions of the Renaissance, edited by Roger Chartier, translated by Arthur Goldhammer, Harvard University Press, 1989, pp. 363-95.

Guenther, Katja. “‘It’s All Done with Mirrors’: V.S. Ramachandran and the Material Culture of Phantom Limb Research.” Medical History, vol. 60, no. 3, 2016, pp. 342-58, https://doi.org/10.1017/mdh.2016.27.

Hayles, N. Katherine. How We Became Posthuman: Virtual Bodies in Cybernetics, Literature, and Informatics. University of Chicago Press, 1999.

—. My Mother Was a Computer: Digital Subjects and Literary Texts. University of Chicago Press, 2005.

Hoel, Erik. “A.I.-Generated Garbage Is Polluting Our Culture.” The New York Times, 29 Mar. 2024, https://www.nytimes.com/2024/03/29/opinion/ai-internet-x-youtube.html. Accessed 19 Jan. 2025.

Johnson, Bobbie. “Privacy No Longer a Social Norm, Says Facebook Founder.” The Guardian, 11 Jan. 2010, http://www.theguardian.com/technology/2010/jan/11/facebook-privacy. Accessed 19 Jan. 2025.

Kaste, Martin. “Real-Time Facial Recognition Is Available, But Will U.S. Police Buy It?” NPR, 10 May 2018, https://www.npr.org/2018/05/10/609422158/real-time-facial-recognition-is-available-but-will-u-s-police-buy-it. Accessed 22 Jan. 2025.

Kehlmann, Daniel. Measuring the World. Translated by Carol Brown Janeway, Pantheon Books, 2006.

Kerr, Sarah. “The Triumph of Roberto Bolaño.” The New York Review of Books, vol. 55, no. 20, 18 Dec. 2008, pp. 12-16.

Lipton, Eric. “A.I. Brings the Robot Wingman to Aerial Combat.” The New York Times, 27 Aug. 2023, https://www.nytimes.com/2023/08/27/us/politics/ai-air-force.html. Accessed 19 Jan. 2025.

Macpherson, C. B. The Political Theory of Possessive Individualism: Hobbes to Locke. Clarendon Press, 1962.

Manjoo, Farhad. “I Visited 47 Sites. Hundreds of Trackers Followed Me.” The New York Times, 23 Aug. 2019, https://www.nytimes.com/interactive/2019/08/23/opinion/data-internet-privacy-tracking.html. Accessed 19 Jan. 2025.

Mole, Beth. “Scientists Aghast at Bizarre AI Rat with Huge Genitals in Peer-Reviewed Article.” Ars Technica, 15 Feb. 2024, https://arstechnica.com/science/2024/02/scientists-aghast-at-bizarre-ai-rat-with-huge-genitals-in-peer-reviewed-article/. Accessed 19 Jan. 2025.

Moody, James. “More Than a Pretty Picture: Visual Thinking in Network Science.” Henkels Lecture Series on Social Networks, University of Notre Dame, 29 Oct. 2009.

Moretti, Franco. Graphs, Maps, Trees: Abstract Models for Literary History. Verso, 2005.

Orlin, Lena Cowen. The Private Life of William Shakespeare. Oxford University Press, 2021.

Panofsky, Erwin. Perspective as Symbolic Form. Zone Books, 1991.

Privacy Bee. “These Are the Largest Data Brokers in America.” https://privacybee.com/blog/these-are-the-largest-data-brokers-in-america/. Accessed 8 July 2025.

Rooney, Sally. Conversations with Friends. Hogarth, 2017. Kindle Edition.

Ryan-Mosley, Tate. “A High School’s Deepfake Porn Scandal Is Pushing US Lawmakers into Action.” MIT Technology Review, 1 Dec. 2023, https://www.technologyreview.com/2023/12/01/1084164/deepfake-porn-scandal-pushing-us-lawmakers/. Accessed 19 Jan. 2025.

Salavon, Jason. “Every Playboy Centerfold, The Decades.” http://salavon.com/work/EveryPlayboyCenterfoldDecades/. Accessed 5 July 2025.

Shiff, Richard. “Cultural Trouble.” The Brooklyn Rail, Dec. 2024-Jan. 2025, https://brooklynrail.org/2024/12/railing-opinion/culture-trouble/. Accessed 19 Jan. 2025.

Smith, Rachel Greenwald. “Novel Architectures.” Contemporary Literature, vol. 55, no. 3, 2014, pp. 593-99.

Tabbi, Joseph. The Cambridge Introduction to Literary Posthumanism. Cambridge University Press, 2024.

—. Cognitive Fictions. University of Minnesota Press, 2002.

—. “Cognitive Fictions: Cognition against Narrative.” American Book Review, Sept.-Oct. 2010, pp. 3-4.

—. Postmodern Sublime: Technology and American Writing from Mailer to Cyberpunk. Cornell University Press, 1995.

Thompson, Stuart A., and Charlie Warzel. “How Your Phone Betrays Democracy.” The New York Times, 21 Dec. 2019, https://www.nytimes.com/interactive/2019/12/21/opinion/location-data-democracy-protests.html. Accessed 4 July 2025.

Tomasula, Steve. “Ars [telomeres] longa, vita [telomeres] brevis: Edunia & The Natural History of an Enigma.” ASAP/Journal, vol. 1, no. 2, 2016, pp. 287-309.

Tucker, Patrick. “Has Big Data Made Anonymity Impossible?” MIT Technology Review, 7 May 2013, https://www.technologyreview.com/2013/05/07/178542/has-big-data-made-anonymity-impossible/. Accessed 23 Jan. 2025.

Watt, Ian. The Rise of the Novel: Studies in Defoe, Richardson and Fielding. University of California Press, 1971.

Whitacre, Eric. “A Virtual Choir 2,000 Voices Strong.” TED, http://www.ted.com/talks/eric_whitacre_a_virtual_choir_2_000_voices_strong#t-5412. Accessed 5 July 2025.

Wolf, Gary. “The Data-Driven Life.” The New York Times, 28 Apr. 2010, https://www.nytimes.com/2010/05/02/magazine/02self-measurement-t.html. Accessed 19 Jan. 2025.

WTSA Radio. “Surprise! You’re on Camera an Average of 238 Times a Week!” 24 Sept. 2020, https://wtsaradio.com/2020/09/24/surprise-youre-on-camera-an-average-of-238-times-a-week/. Accessed 19 Jan. 2025.

Žižek, Slavoj. The Sublime Object of Ideology. Verso, 1989.

Cite this article

Tomasula, Steve. "I Always Wanted to Be a Media Theorist Who Wrote with a Telegraph Key." electronic book review, 17 April 2026, https://doi.org/10.64773/8jz6-vi96.